When stepping outside.

Thunder overhead rumbles.

My glasses fog up.

Category : mountaintop musings

Reviews, rants, and academic polemics.

Scrooge.

Most people hate me.

Eek! Look! It’s a scary ghost.

Fetch me that prize goose.

A New Gaming / Movie Setup.

So I’m building a new gaming (and movie) setup for some of my existing gear. The configuration list is:

- Xbox Series X, mounted in

- ElecGear Wall Mount, with VESA mount pattern.

- VIVO wall-mount VESA arm (in white), which will be bolted to a leg of one of my white workbenches. EDIT: this was replaced with an Ergotron model, because the Vivo one suffered a structural failure:

…in the metal construction within two days.

- TCL C7k 50″ TV mounted on:

- iPad Pro, mounted on: Edit: This didn’t really work out, for reasons listed below.

- Kuxiu VESA / Ball Mount smart connector charging mount.

- Anglepoise-style suspension arm with 1/4″-20 ball head. EDIT: This really didn’t work out, as the fixed ball head is too weak to secure the sorts of weight it claims to handle.

- Arcon Mount Ball to 1/4″-20 adapter.

- MagSafe-style breakaway 90-degree USB-C adapter, to prevent port strain. Edit: This was brilliant.

- Added to that a second straight USB-C magnetic connector to charge my headphones in order to cut down on port wear, using the same 24-pin connector. Edit: This was also brilliant.

Now we play the waiting game for all the bits to arrive.

To provide some context, gaming is the major social activity I have, given the people closest to me live hours away, and Covid has denied me pubs, bars, restaurants, cinemas etc. The way we set things up is to run a FaceTime call on the iPad, floating in space to my side, with the FaceTime audio going to Bluetooth earbuds, worn under a gaming headset, for games where in-game chat doesn’t work. You get the full social aspect of LAN gaming in the same room.

Meeting your heroes.

Brunelling, Pt 2

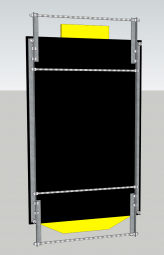

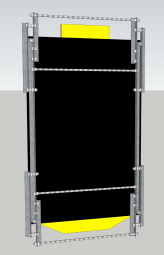

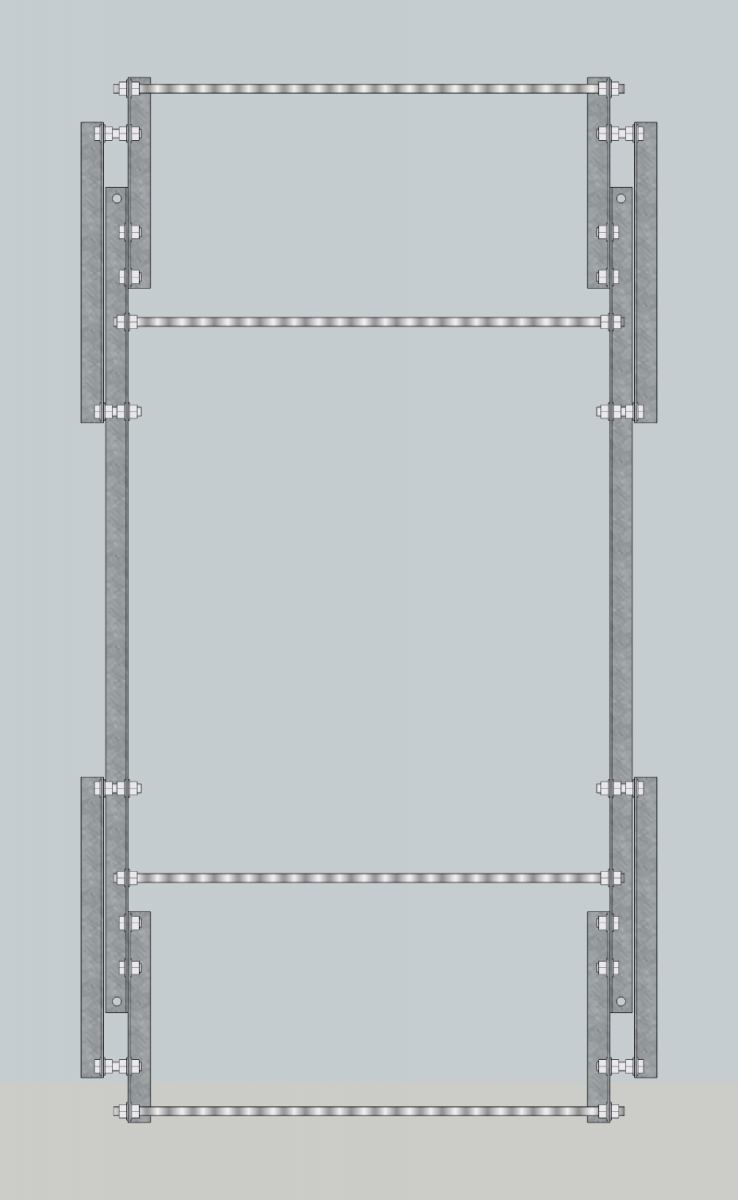

All the steel for the project, cut to size, and drilled to my specifications.

The paper is rubbings taken from the actual sculpture, which were used to get the exact positioning of the corner bolts on the work. We figured out that there was only 1mm variation from left to right with the positions of the bolts, which is gratifying to see.

Adding a couple of mm to the size of the holes means everything can be lined up, and there’s enough play in the structure.

Biblically Accurate…

Brunelling

For a couple of years, my work C45C4D3 (Cascade) was hanging in the Makerspace of Noosa Library. It went in before Covid, and it wasn’t until early 2024 that I was able, physically and emotionally, to inspect it.

The results are not good. The hanging system was bending the main board of the work, and putting a twisting pressure through the corner of the work at its weakest point (where the bolt is drilled through).

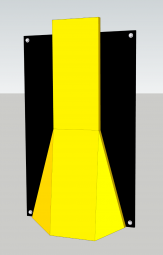

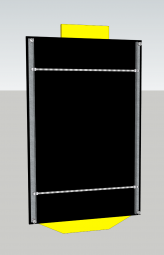

Knowing I needed a more robust solution that would both relieve the board of flexing stress when it was in storage and provide a better hanging option, I went into SketchUp and started modelling. I ended up getting in touch with my inner Isambard Kingdom Brunel.

The final plan provides a modular structure that requires only three distinct pieces of 50mm x 5mm angle in mild steel. They’re designed so that they’re symmetrical, and can be flipped end-over-end for left and right side applicability. Once it’s all affixed to the work, it should be protected when hanging, in storage, and during transport.

Lost in Time (Machine).

Gather ’round, children, for a tale of a technological and chronological trainwreck.

It was a dark, and stormy night…

…I was working on trying to bring some old Aperture Libraries into Capture One, and the imports were failing, with an error:

Catalog import failed. The Library is currently open.

Checking Capture One’s support site yielded only a support article about a lock file, but it was for Capture One catalogues, not Aperture libraries.

What I should have done right here is file a support request with Capture One, wait for an answer, and stop work.

That sentence is in the voice of me as the protagonist narrator, over a still frame of me about to do the thing I most definitely should not have done.

What I did, as the footage resumes playing, was to try troubleshooting, so I could provide Capture One’s support people with an isolation of the problem.

My first thought was that the problem might be specific to how the failing Aperture libraries were made: they were the ones I had exported from my main Aperture catalogue running on my Mac Mini, while it was accessing images on a drive shared over the network from my Mac Pro workstation. Ok, so booting up the Mac Mini, enabling File Sharing on the Mac Pro, and then enabling the File Sharing user’s access to the photo drive…

Re-exporting the libraries confirmed the problem wasn’t a one-off.

Eventually, what I realised is I had to export the library to a local folder on the Mac Mini, then copy it to the Mac Pro. From there, the library opens fine in Capture One.

So, the problem is that when Aperture writes the library to a network location, it leaves a lock file in place, which trips up Capture One.

Problem solved; I exported all the particular libraries I wanted to re-process to a local drive on the Mac Mini, then copied them across to the Mac Pro, shut the Mac Mini down, and went to go back to Capture One on the Mac Pro.

I filled in a support ticket with Capture One’s support staff, to see if they’d encountered the problem, as I hadn’t been able to see it described in their support site.

As an aside: if you’re setting up a support site for a piece of software, why not try structuring your knowledge base of answers based upon the menu structure of the program? So if I have a problem with the function File > Import Catalogue > Aperture Library, let me navigate to that and see all the issues related to importing Aperture Libraries.

Knowing I wouldn’t need to continue having file sharing on, I disabled it, and then removed the sharing user from the Photo drive permissions.

Then Time Machine began to run… and continued to run… a lot longer than it usually does. Once it had finished, I noticed that rather than the 1.5TB of free space my Time Machine drive should have, there was now only 80GB of free space. Thinking backwards, the horror of what had happened began to dawn upon me (is this sounding like The Martian where he shorts the probe with the drill?).

By performing a recursive addition, and then removal of a user permission from my photo drive, I’d somehow caused Time Machine to think the entire drive needed to be backed up afresh. That may not have been so bad, except for a decision by Apple engineers; on a 4TB drive, there should be a minimum of 80GB of free space on a Time Machine backup. So despite the entire new backup fitting within the space available on the drive, Time Machine deleted two months of my oldest backups to free up that extra space.

I went into the command line and, using the tmutil command, removed the new giant snapshot, but unfortunately that didn’t liberate any space on the drive. I ran Time Machine again to see if it would do the same huge backup a second time, and it didn’t, but still no liberation of space. Tired of fighting with it, I decided to take the drive (and its twin, which had avoided the problem) out of service entirely. They’re now stored in split locations, having served only 14 months of use.

So, I brought in a new pair of drives, 8TB this time, and we’ll see if Time Machine can behave in a more orderly fashion this time around.

Eventually I heard back from Capture One’s support folks; the Aperture lock file is located in the Library’s package contents in:

Database/apdb/lockfile.pid

Removing that file solves the problem.
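If you have more than a couple of libraries to clean up, the removal can be scripted. What follows is a minimal sketch in Python, assuming only the lock file path Capture One's support gave me; the function name `remove_aperture_lockfile` and its `dry_run` flag are my own inventions for illustration, not anything from Aperture or Capture One.

```python
from pathlib import Path

# Location of the lock file inside an Aperture library package,
# as reported by Capture One support.
LOCKFILE_RELATIVE = Path("Database") / "apdb" / "lockfile.pid"


def remove_aperture_lockfile(library: Path, dry_run: bool = True) -> bool:
    """Remove a stale lock file from an Aperture library package.

    Hypothetical helper. Returns True if a lock file was found
    (and deleted, unless dry_run is set), False if none was present.
    """
    lockfile = Path(library) / LOCKFILE_RELATIVE
    if not lockfile.is_file():
        return False
    if not dry_run:
        lockfile.unlink()
    return True
```

Call it with the path to the library package, for example `remove_aperture_lockfile(Path("Old Shoot.aplibrary"), dry_run=False)` (that library name is made up). It defaults to a dry run so nothing is deleted until you ask for it, and it should only be run once Aperture has closed the library, since a lock file on a library that really is open is doing its job.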

Removing Variables

Uniforms have been a part of my life for as long as I can remember. The primary school uniform; a simple blue collared shirt, and grey shorts. High school; a tie, blazer, and starch-stiffened straw hat. Scouts; a pseudo-military green shirt & shorts combination, with a neckerchief held together with the amusingly named “woggle”. Air Training Corps; an air force-based version of Cadets, compulsory service while in High School, blue slacks, shirt, tie, navy scratchy woollen jumper, and a forage cap.

Post school I landed traumatised in the Goth scene, and subcultural uniforms became the rest of my life – something you never really grow out of if you were in a particular time in which subcultures were a thing.

And pathetically, many dedicated followers of fashion become kinkily attached to their adopted styles, and become Style-Mongers; promoting their narrow range of looking, speaking and thinking, as if all previous modes of being have been erased, and no alternative could possibly supplant the present one.

Tenacious style-mongering is always found among those too young to have experienced the inevitability of stylistic burnout. But in fact, the rise of new titillation, the peaking to saturation, the descent to tiresome, and finally the relegation to a timeless limbo of nostalgia is the fate of all styles.

Except, of course, Cubism.

Crosley Bendix – Style.

So it was when I decided part of de-cluttering life was removing daily pain-points like the way cotton t-shirts always seem to get deodorant marks in the armpits, and they’re never cut right – too boxy, or too short. Prior to travelling to Japan, I bought a few really nice merino-blend thermal shirts. They were expensive, but so much more pleasant and practical than my normal wardrobe. Seeing the superseding model on sale recently at 50% off, I dropped the equivalent of three weeks’ rent on buying ten of them. I am living the uniform life. Not only uniform black, but literally the same shirt every day.

The other variable I decided to remove from life was bad sleep.

The fourth most expensive thing I’ve ever purchased – a memory-foam mattress and power-articulated bed frame.

It’s the best sleep I’ve ever had.

So there’s two major variables I’ve just edited out of life: what to wear, and will I get a good night’s sleep… Solved.

A new book!

I have a new book released today!

This was done as a quickie, while I was stalled on Day And Night, and reminded me of how well I’ve centred on a working production process to make things like this. Total turnaround, given the images were already done, was about three days.